미들웨어는 에이전트 내부에서 발생하는 동작을 더욱 정밀하게 제어할 수 있는 방법을 제공합니다.

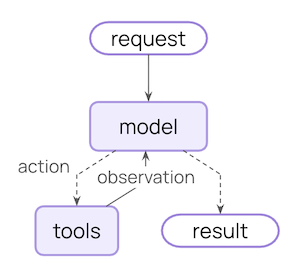

핵심 에이전트 루프는 모델 호출, 모델이 실행할 도구 선택, 그리고 더 이상 도구를 호출하지 않을 때 종료되는 과정을 포함합니다:

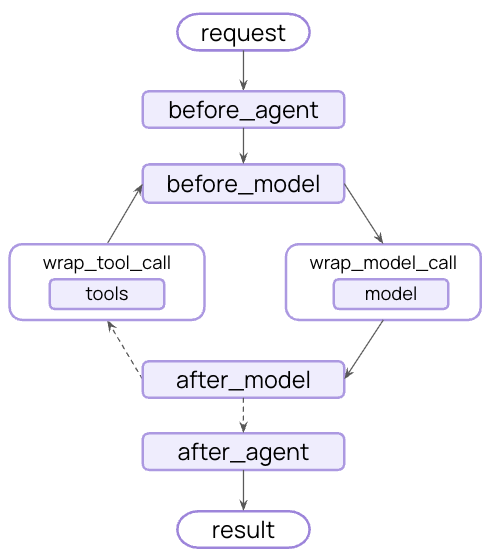

미들웨어는 이러한 각 단계의 전후에 훅을 노출합니다:

미들웨어로 할 수 있는 일

모니터링 로깅, 분석 및 디버깅을 통해 에이전트 동작을 추적합니다

수정 프롬프트, 도구 선택 및 출력 형식을 변환합니다

제어 재시도, 폴백 및 조기 종료 로직을 추가합니다

강제 속도 제한, 가드레일 및 PII 탐지를 적용합니다

create_agentfrom langchain.agents import create_agent from langchain.agents.middleware import SummarizationMiddleware, HumanInTheLoopMiddleware agent = create_agent( model = "openai:gpt-4o" , tools = [ ... ], middleware = [SummarizationMiddleware(), HumanInTheLoopMiddleware()], )

내장 미들웨어 LangChain은 일반적인 사용 사례를 위한 사전 구축된 미들웨어를 제공합니다:

토큰 제한에 근접할 때 대화 기록을 자동으로 요약합니다.

다음과 같은 경우에 적합:

컨텍스트 창을 초과하는 장기 실행 대화

광범위한 기록이 있는 다중 턴 대화

전체 대화 컨텍스트 보존이 중요한 애플리케이션

from langchain.agents import create_agent from langchain.agents.middleware import SummarizationMiddleware agent = create_agent( model = "openai:gpt-4o" , tools = [weather_tool, calculator_tool], middleware = [ SummarizationMiddleware( model = "openai:gpt-4o-mini" , max_tokens_before_summary = 4000 , # 4000 토큰에서 요약 트리거 messages_to_keep = 20 , # 요약 후 마지막 20개 메시지 유지 summary_prompt = "Custom prompt for summarization..." , # 선택사항 ), ], )

max_tokens_before_summary

요약을 트리거하는 토큰 임계값

사용자 정의 토큰 카운팅 함수. 기본값은 문자 기반 카운팅입니다.

사용자 정의 프롬프트 템플릿. 지정되지 않으면 내장 템플릿을 사용합니다.

summary_prefix

string

default: "## Previous conversation summary:"

요약 메시지의 접두사

휴먼 인 더 루프 도구 호출이 실행되기 전에 사람의 승인, 편집 또는 거부를 위해 에이전트 실행을 일시 중지합니다.

다음과 같은 경우에 적합:

사람의 승인이 필요한 고위험 작업(데이터베이스 쓰기, 금융 거래)

사람의 감독이 필수인 규정 준수 워크플로

에이전트 가이드를 위해 사람의 피드백이 사용되는 장기 실행 대화

from langchain.agents import create_agent from langchain.agents.middleware import HumanInTheLoopMiddleware from langgraph.checkpoint.memory import InMemorySaver agent = create_agent( model = "openai:gpt-4o" , tools = [read_email_tool, send_email_tool], checkpointer = InMemorySaver(), middleware = [ HumanInTheLoopMiddleware( interrupt_on = { # 이메일 전송에 대한 승인, 편집 또는 거부 필요 "send_email_tool" : { "allowed_decisions" : [ "approve" , "edit" , "reject" ], }, # 이메일 읽기 자동 승인 "read_email_tool" : False , } ), ], )

도구 이름을 승인 설정에 매핑합니다. 값은 True(기본 설정으로 중단), False(자동 승인) 또는 InterruptOnConfig 객체일 수 있습니다.

description_prefix

string

default: "Tool execution requires approval"

작업 요청 설명의 접두사

InterruptOnConfig 옵션:허용되는 결정 목록: "approve", "edit" 또는 "reject"

사용자 정의 설명을 위한 정적 문자열 또는 호출 가능 함수

중요: 휴먼 인 더 루프 미들웨어는 중단 간 상태를 유지하기 위해 체크포인터 가 필요합니다.전체 예제 및 통합 패턴은 휴먼 인 더 루프 문서 를 참조하세요. Anthropic 프롬프트 캐싱 Anthropic 모델로 반복적인 프롬프트 접두사를 캐싱하여 비용을 절감합니다.

다음과 같은 경우에 적합:

길고 반복되는 시스템 프롬프트가 있는 애플리케이션

호출 간 동일한 컨텍스트를 재사용하는 에이전트

대량 배포를 위한 API 비용 절감

from langchain_anthropic import ChatAnthropic from langchain_anthropic.middleware import AnthropicPromptCachingMiddleware from langchain.agents import create_agent LONG_PROMPT = """ Please be a helpful assistant. <Lots more context ...> """ agent = create_agent( model = ChatAnthropic( model = "claude-sonnet-4-latest" ), system_prompt = LONG_PROMPT , middleware = [AnthropicPromptCachingMiddleware( ttl = "5m" )], ) # 캐시 저장 agent.invoke({ "messages" : [HumanMessage( "Hi, my name is Bob" )]}) # 캐시 히트, 시스템 프롬프트가 캐싱됨 agent.invoke({ "messages" : [HumanMessage( "What's my name?" )]})

type

string

default: "ephemeral"

캐시 유형. 현재 "ephemeral"만 지원됩니다.

캐시된 콘텐츠의 유지 시간. 유효한 값: "5m" 또는 "1h"

unsupported_model_behavior

Anthropic이 아닌 모델 사용 시 동작. 옵션: "ignore", "warn" 또는 "raise"

모델 호출 제한 무한 루프나 과도한 비용을 방지하기 위해 모델 호출 수를 제한합니다.

다음과 같은 경우에 적합:

너무 많은 API 호출을 하는 에이전트 방지

프로덕션 배포에 대한 비용 제어 강제

특정 호출 예산 내에서 에이전트 동작 테스트

from langchain.agents import create_agent from langchain.agents.middleware import ModelCallLimitMiddleware agent = create_agent( model = "openai:gpt-4o" , tools = [ ... ], middleware = [ ModelCallLimitMiddleware( thread_limit = 10 , # 스레드당 최대 10회 호출(실행 간) run_limit = 5 , # 실행당 최대 5회 호출(단일 호출) exit_behavior = "end" , # 또는 "error"로 예외 발생 ), ], )

스레드의 모든 실행에 걸친 최대 모델 호출 수. 기본값은 제한 없음입니다.

단일 호출당 최대 모델 호출 수. 기본값은 제한 없음입니다.

제한 도달 시 동작. 옵션: "end"(정상 종료) 또는 "error"(예외 발생)

도구 호출 제한 특정 도구 또는 모든 도구에 대한 도구 호출 수를 제한합니다.

다음과 같은 경우에 적합:

비용이 많이 드는 외부 API에 대한 과도한 호출 방지

웹 검색 또는 데이터베이스 쿼리 제한

특정 도구 사용에 대한 속도 제한 강제

from langchain.agents import create_agent from langchain.agents.middleware import ToolCallLimitMiddleware # 모든 도구 호출 제한 global_limiter = ToolCallLimitMiddleware( thread_limit = 20 , run_limit = 10 ) # 특정 도구 제한 search_limiter = ToolCallLimitMiddleware( tool_name = "search" , thread_limit = 5 , run_limit = 3 , ) agent = create_agent( model = "openai:gpt-4o" , tools = [ ... ], middleware = [global_limiter, search_limiter], )

제한할 특정 도구. 제공되지 않으면 모든 도구에 제한이 적용됩니다.

스레드의 모든 실행에 걸친 최대 도구 호출 수. 기본값은 제한 없음입니다.

단일 호출당 최대 도구 호출 수. 기본값은 제한 없음입니다.

제한 도달 시 동작. 옵션: "end"(정상 종료) 또는 "error"(예외 발생)

모델 폴백 기본 모델이 실패할 때 대체 모델로 자동 폴백합니다.

다음과 같은 경우에 적합:

모델 중단을 처리하는 복원력 있는 에이전트 구축

더 저렴한 모델로 폴백하여 비용 최적화

OpenAI, Anthropic 등의 제공업체 중복성

from langchain.agents import create_agent from langchain.agents.middleware import ModelFallbackMiddleware agent = create_agent( model = "openai:gpt-4o" , # 기본 모델 tools = [ ... ], middleware = [ ModelFallbackMiddleware( "openai:gpt-4o-mini" , # 오류 시 첫 번째 시도 "anthropic:claude-3-5-sonnet-20241022" , # 그 다음 이것 ), ], )

first_model

string | BaseChatModel

required

기본 모델이 실패할 때 시도할 첫 번째 폴백 모델. 모델 문자열(예: "openai:gpt-4o-mini") 또는 BaseChatModel 인스턴스일 수 있습니다.

이전 모델이 실패할 경우 순서대로 시도할 추가 폴백 모델

PII 탐지 대화에서 개인 식별 정보를 탐지하고 처리합니다.

다음과 같은 경우에 적합:

규정 준수 요구 사항이 있는 의료 및 금융 애플리케이션

로그를 정제해야 하는 고객 서비스 에이전트

민감한 사용자 데이터를 처리하는 모든 애플리케이션

from langchain.agents import create_agent from langchain.agents.middleware import PIIMiddleware agent = create_agent( model = "openai:gpt-4o" , tools = [ ... ], middleware = [ # 사용자 입력에서 이메일 수정 PIIMiddleware( "email" , strategy = "redact" , apply_to_input = True ), # 신용카드 마스킹(마지막 4자리 표시) PIIMiddleware( "credit_card" , strategy = "mask" , apply_to_input = True ), # 정규식으로 사용자 정의 PII 유형 PIIMiddleware( "api_key" , detector = r "sk- [ a-zA-Z0-9 ] {32} " , strategy = "block" , # 탐지되면 오류 발생 ), ], )

탐지할 PII 유형. 내장 유형(email, credit_card, ip, mac_address, url) 또는 사용자 정의 유형 이름일 수 있습니다.

탐지된 PII 처리 방법. 옵션:

"block" - 탐지 시 예외 발생"redact" - [REDACTED_TYPE]으로 대체"mask" - 부분 마스킹(예: ****-****-****-1234)"hash" - 결정적 해시로 대체 사용자 정의 탐지기 함수 또는 정규식 패턴. 제공되지 않으면 PII 유형에 대한 내장 탐지기를 사용합니다.

복잡한 다단계 작업을 위한 할 일 목록 관리 기능을 추가합니다.

이 미들웨어는 에이전트에게 write_todos 도구와 효과적인 작업 계획을 안내하는 시스템 프롬프트를 자동으로 제공합니다.

from langchain.agents import create_agent from langchain.agents.middleware import TodoListMiddleware from langchain.messages import HumanMessage agent = create_agent( model = "openai:gpt-4o" , tools = [ ... ], middleware = [TodoListMiddleware()], ) result = agent.invoke({ "messages" : [HumanMessage( "Help me refactor my codebase" )]}) print (result[ "todos" ]) # 상태 추적이 있는 할 일 항목 배열

할 일 사용을 안내하는 사용자 정의 시스템 프롬프트. 지정되지 않으면 내장 프롬프트를 사용합니다.

write_todos 도구에 대한 사용자 정의 설명. 지정되지 않으면 내장 설명을 사용합니다.

LLM 도구 선택기 메인 모델을 호출하기 전에 LLM을 사용하여 관련 도구를 지능적으로 선택합니다.

다음과 같은 경우에 적합:

대부분이 쿼리당 관련이 없는 많은 도구(10개 이상)가 있는 에이전트

관련 없는 도구를 필터링하여 토큰 사용량 줄이기

모델 집중도 및 정확도 향상

from langchain.agents import create_agent from langchain.agents.middleware import LLMToolSelectorMiddleware agent = create_agent( model = "openai:gpt-4o" , tools = [tool1, tool2, tool3, tool4, tool5, ... ], # 많은 도구 middleware = [ LLMToolSelectorMiddleware( model = "openai:gpt-4o-mini" , # 선택에 더 저렴한 모델 사용 max_tools = 3 , # 가장 관련성 높은 도구 3개로 제한 always_include = [ "search" ], # 특정 도구 항상 포함 ), ], )

도구 선택을 위한 모델. 모델 문자열 또는 BaseChatModel 인스턴스일 수 있습니다. 기본값은 에이전트의 메인 모델입니다.

선택 모델을 위한 지침. 지정되지 않으면 내장 프롬프트를 사용합니다.

선택할 최대 도구 수. 기본값은 제한 없음입니다.

도구 재시도 설정 가능한 지수 백오프로 실패한 도구 호출을 자동으로 재시도합니다.

다음과 같은 경우에 적합:

외부 API 호출에서 일시적인 실패 처리

네트워크 의존 도구의 신뢰성 향상

일시적인 오류를 우아하게 처리하는 복원력 있는 에이전트 구축

from langchain.agents import create_agent from langchain.agents.middleware import ToolRetryMiddleware agent = create_agent( model = "openai:gpt-4o" , tools = [search_tool, database_tool], middleware = [ ToolRetryMiddleware( max_retries = 3 , # 최대 3회 재시도 backoff_factor = 2.0 , # 지수 백오프 배수 initial_delay = 1.0 , # 1초 지연으로 시작 max_delay = 60.0 , # 최대 60초로 지연 제한 jitter = True , # 썬더링 허드 방지를 위한 무작위 지터 추가 ), ], )

초기 호출 후 최대 재시도 횟수(기본값으로 총 3회 시도)

재시도 로직을 적용할 도구 또는 도구 이름의 선택적 목록. None이면 모든 도구에 적용됩니다.

retry_on

tuple[type[Exception], ...] | callable

default: "(Exception,)"

재시도할 예외 유형의 튜플 또는 예외를 받아 재시도 여부를 반환하는 호출 가능 함수.

on_failure

string | callable

default: "return_message"

모든 재시도가 소진되었을 때의 동작. 옵션:

"return_message" - 오류 세부 정보가 있는 ToolMessage 반환(LLM이 실패를 처리하도록 허용)"raise" - 예외 다시 발생(에이전트 실행 중지)사용자 정의 호출 가능 - 예외를 받아 ToolMessage 콘텐츠에 대한 문자열을 반환하는 함수

지수 백오프를 위한 배수. 각 재시도는 initial_delay * (backoff_factor ** retry_number) 초를 기다립니다. 일정한 지연을 위해 0.0으로 설정합니다.

재시도 간 최대 지연(초)(지수 백오프 성장 제한)

썬더링 허드를 방지하기 위해 지연에 무작위 지터(±25%)를 추가할지 여부

LLM 도구 에뮬레이터 테스트 목적으로 LLM을 사용하여 도구 실행을 에뮬레이트하고, 실제 도구 호출을 AI가 생성한 응답으로 대체합니다.

다음과 같은 경우에 적합:

실제 도구를 실행하지 않고 에이전트 동작 테스트

외부 도구를 사용할 수 없거나 비용이 많이 드는 경우 에이전트 개발

실제 도구를 구현하기 전에 에이전트 워크플로 프로토타이핑

from langchain.agents import create_agent from langchain.agents.middleware import LLMToolEmulator agent = create_agent( model = "openai:gpt-4o" , tools = [get_weather, search_database, send_email], middleware = [ # 기본적으로 모든 도구 에뮬레이트 LLMToolEmulator(), # 또는 특정 도구 에뮬레이트 # LLMToolEmulator(tools=["get_weather", "search_database"]), # 또는 에뮬레이션을 위한 사용자 정의 모델 사용 # LLMToolEmulator(model="anthropic:claude-3-5-sonnet-latest"), ], )

에뮬레이트할 도구 이름(str) 또는 BaseTool 인스턴스의 목록. None(기본값)이면 모든 도구가 에뮬레이트됩니다. 빈 목록이면 도구가 에뮬레이트되지 않습니다.

model

string | BaseChatModel

default: "anthropic:claude-3-5-sonnet-latest"

에뮬레이트된 도구 응답을 생성하는 데 사용할 모델. 모델 식별자 문자열 또는 BaseChatModel 인스턴스일 수 있습니다.

컨텍스트 편집 도구 사용을 트리밍, 요약 또는 지우는 방식으로 대화 컨텍스트를 관리합니다.

다음과 같은 경우에 적합:

정기적인 컨텍스트 정리가 필요한 긴 대화

컨텍스트에서 실패한 도구 시도 제거

사용자 정의 컨텍스트 관리 전략

from langchain.agents import create_agent from langchain.agents.middleware import ContextEditingMiddleware, ClearToolUsesEdit agent = create_agent( model = "openai:gpt-4o" , tools = [ ... ], middleware = [ ContextEditingMiddleware( edits = [ ClearToolUsesEdit( max_tokens = 1000 ), # 오래된 도구 사용 지우기 ], ), ], )

edits

list[ContextEdit]

default: "[ClearToolUsesEdit()]"

적용할 ContextEdit 전략 목록

token_count_method

string

default: "approximate"

토큰 카운팅 방법. 옵션: "approximate" 또는 "model"

ClearToolUsesEditplaceholder

string

default: "[cleared]"

지워진 출력에 대한 플레이스홀더 텍스트

사용자 정의 미들웨어 에이전트 실행 흐름의 특정 지점에서 실행되는 훅을 구현하여 사용자 정의 미들웨어를 구축합니다.

미들웨어를 두 가지 방법으로 생성할 수 있습니다:

데코레이터 기반 - 단일 훅 미들웨어에 빠르고 간단함클래스 기반 - 여러 훅을 사용하는 복잡한 미들웨어에 더 강력함

데코레이터 기반 미들웨어 단일 훅만 필요한 간단한 미들웨어의 경우, 데코레이터가 기능을 추가하는 가장 빠른 방법을 제공합니다:

from langchain.agents.middleware import before_model, after_model, wrap_model_call from langchain.agents.middleware import AgentState, ModelRequest, ModelResponse, dynamic_prompt from langchain.messages import AIMessage from langchain.agents import create_agent from langgraph.runtime import Runtime from typing import Any, Callable # 노드 스타일: 모델 호출 전 로깅 @before_model def log_before_model ( state : AgentState, runtime : Runtime) -> dict[ str , Any] | None : print ( f "About to call model with { len (state[ 'messages' ]) } messages" ) return None # 노드 스타일: 모델 호출 후 유효성 검사 @after_model ( can_jump_to = [ "end" ]) def validate_output ( state : AgentState, runtime : Runtime) -> dict[ str , Any] | None : last_message = state[ "messages" ][ - 1 ] if "BLOCKED" in last_message.content: return { "messages" : [AIMessage( "I cannot respond to that request." )], "jump_to" : "end" } return None # 랩 스타일: 재시도 로직 @wrap_model_call def retry_model ( request : ModelRequest, handler : Callable[[ModelRequest], ModelResponse], ) -> ModelResponse: for attempt in range ( 3 ): try : return handler(request) except Exception as e: if attempt == 2 : raise print ( f "Retry { attempt + 1 } /3 after error: { e } " ) # 랩 스타일: 동적 프롬프트 @dynamic_prompt def personalized_prompt ( request : ModelRequest) -> str : user_id = request.runtime.context.get( "user_id" , "guest" ) return f "You are a helpful assistant for user { user_id } . Be concise and friendly." # 에이전트에서 데코레이터 사용 agent = create_agent( model = "openai:gpt-4o" , middleware = [log_before_model, validate_output, retry_model, personalized_prompt], tools = [ ... ], )

사용 가능한 데코레이터 노드 스타일 (특정 실행 지점에서 실행):랩 스타일 (실행을 가로채고 제어):편의 데코레이터 :데코레이터 사용 시기

데코레이터 사용 시 • 단일 훅 필요

클래스 사용 시 • 여러 훅 필요

클래스 기반 미들웨어 두 가지 훅 스타일

노드 스타일 훅 특정 실행 지점에서 순차적으로 실행됩니다. 로깅, 유효성 검사 및 상태 업데이트에 사용합니다.

랩 스타일 훅 핸들러 호출을 완전히 제어하여 실행을 가로챕니다. 재시도, 캐싱 및 변환에 사용합니다.

노드 스타일 훅 실행 흐름의 특정 지점에서 실행됩니다:

before_agent - 에이전트 시작 전(호출당 한 번)before_model - 각 모델 호출 전after_model - 각 모델 응답 후after_agent - 에이전트 완료 후(호출당 최대 한 번)

예제: 로깅 미들웨어 from langchain.agents.middleware import AgentMiddleware, AgentState from langgraph.runtime import Runtime from typing import Any class LoggingMiddleware ( AgentMiddleware ): def before_model ( self , state : AgentState, runtime : Runtime) -> dict[ str , Any] | None : print ( f "About to call model with { len (state[ 'messages' ]) } messages" ) return None def after_model ( self , state : AgentState, runtime : Runtime) -> dict[ str , Any] | None : print ( f "Model returned: { state[ 'messages' ][ - 1 ].content } " ) return None

예제: 대화 길이 제한 from langchain.agents.middleware import AgentMiddleware, AgentState from langchain.messages import AIMessage from langgraph.runtime import Runtime from typing import Any class MessageLimitMiddleware ( AgentMiddleware ): def __init__ ( self , max_messages : int = 50 ): super (). __init__ () self .max_messages = max_messages def before_model ( self , state : AgentState, runtime : Runtime) -> dict[ str , Any] | None : if len (state[ "messages" ]) == self .max_messages: return { "messages" : [AIMessage( "Conversation limit reached." )], "jump_to" : "end" } return None

랩 스타일 훅 실행을 가로채고 핸들러 호출 시점을 제어합니다:

wrap_model_call - 각 모델 호출 주변wrap_tool_call - 각 도구 호출 주변

핸들러를 0회(단락), 1회(정상 흐름) 또는 여러 번(재시도 로직) 호출할지 결정합니다.

예제: 모델 재시도 미들웨어 from langchain.agents.middleware import AgentMiddleware, ModelRequest, ModelResponse from typing import Callable class RetryMiddleware ( AgentMiddleware ): def __init__ ( self , max_retries : int = 3 ): super (). __init__ () self .max_retries = max_retries def wrap_model_call ( self , request : ModelRequest, handler : Callable[[ModelRequest], ModelResponse], ) -> ModelResponse: for attempt in range ( self .max_retries): try : return handler(request) except Exception as e: if attempt == self .max_retries - 1 : raise print ( f "Retry { attempt + 1 } / { self .max_retries } after error: { e } " )

예제: 동적 모델 선택 from langchain.agents.middleware import AgentMiddleware, ModelRequest, ModelResponse from langchain.chat_models import init_chat_model from typing import Callable class DynamicModelMiddleware ( AgentMiddleware ): def wrap_model_call ( self , request : ModelRequest, handler : Callable[[ModelRequest], ModelResponse], ) -> ModelResponse: # 대화 길이에 따라 다른 모델 사용 if len (request.messages) > 10 : request.model = init_chat_model( "openai:gpt-4o" ) else : request.model = init_chat_model( "openai:gpt-4o-mini" ) return handler(request)

예제: 도구 호출 모니터링 from langchain.tools.tool_node import ToolCallRequest from langchain.agents.middleware import AgentMiddleware from langchain_core.messages import ToolMessage from langgraph.types import Command from typing import Callable class ToolMonitoringMiddleware ( AgentMiddleware ): def wrap_tool_call ( self , request : ToolCallRequest, handler : Callable[[ToolCallRequest], ToolMessage | Command], ) -> ToolMessage | Command: print ( f "Executing tool: { request.tool_call[ 'name' ] } " ) print ( f "Arguments: { request.tool_call[ 'args' ] } " ) try : result = handler(request) print ( f "Tool completed successfully" ) return result except Exception as e: print ( f "Tool failed: { e } " ) raise

사용자 정의 상태 스키마 미들웨어는 사용자 정의 속성으로 에이전트의 상태를 확장할 수 있습니다. 사용자 정의 상태 타입을 정의하고 state_schema로 설정합니다:

from langchain.agents.middleware import AgentState, AgentMiddleware from typing_extensions import NotRequired from typing import Any class CustomState ( AgentState ): model_call_count: NotRequired[ int ] user_id: NotRequired[ str ] class CallCounterMiddleware (AgentMiddleware[CustomState]): state_schema = CustomState def before_model ( self , state : CustomState, runtime ) -> dict[ str , Any] | None : # 사용자 정의 상태 속성 접근 count = state.get( "model_call_count" , 0 ) if count > 10 : return { "jump_to" : "end" } return None def after_model ( self , state : CustomState, runtime ) -> dict[ str , Any] | None : # 사용자 정의 상태 업데이트 return { "model_call_count" : state.get( "model_call_count" , 0 ) + 1 }

agent = create_agent( model = "openai:gpt-4o" , middleware = [CallCounterMiddleware()], tools = [ ... ], ) # 사용자 정의 상태로 호출 result = agent.invoke({ "messages" : [HumanMessage( "Hello" )], "model_call_count" : 0 , "user_id" : "user-123" , })

실행 순서 여러 미들웨어를 사용할 때 실행 순서를 이해하는 것이 중요합니다:

agent = create_agent( model = "openai:gpt-4o" , middleware = [middleware1, middleware2, middleware3], tools = [ ... ], )

Before 훅은 순서대로 실행됩니다:

middleware1.before_agent()middleware2.before_agent()middleware3.before_agent() 에이전트 루프 시작

middleware1.before_model()middleware2.before_model()middleware3.before_model() Wrap 훅은 함수 호출처럼 중첩됩니다:

middleware1.wrap_model_call() → middleware2.wrap_model_call() → middleware3.wrap_model_call() → model After 훅은 역순으로 실행됩니다:

middleware3.after_model()middleware2.after_model()middleware1.after_model() 에이전트 루프 종료

middleware3.after_agent()middleware2.after_agent()middleware1.after_agent() 주요 규칙:

before_* 훅: 처음부터 마지막까지after_* 훅: 마지막부터 처음까지(역순)wrap_* 훅: 중첩됨(첫 번째 미들웨어가 다른 모든 미들웨어를 감쌈)

에이전트 점프 미들웨어에서 조기에 종료하려면 jump_to가 포함된 딕셔너리를 반환합니다:

class EarlyExitMiddleware ( AgentMiddleware ): def before_model ( self , state : AgentState, runtime ) -> dict[ str , Any] | None : # 조건 확인 if should_exit(state): return { "messages" : [AIMessage( "Exiting early due to condition." )], "jump_to" : "end" } return None

사용 가능한 점프 대상:

"end": 에이전트 실행의 끝으로 점프"tools": 도구 노드로 점프"model": 모델 노드로 점프(또는 첫 번째 before_model 훅)

중요: before_model 또는 after_model에서 점프할 때, "model"로 점프하면 모든 before_model 미들웨어가 다시 실행됩니다.점프를 활성화하려면 훅을 @hook_config(can_jump_to=[...])로 장식합니다:

from langchain.agents.middleware import AgentMiddleware, hook_config from typing import Any class ConditionalMiddleware ( AgentMiddleware ): @hook_config ( can_jump_to = [ "end" , "tools" ]) def after_model ( self , state : AgentState, runtime ) -> dict[ str , Any] | None : if some_condition(state): return { "jump_to" : "end" } return None

모범 사례

미들웨어를 집중적으로 유지 - 각각 한 가지 일을 잘 수행해야 함

오류를 우아하게 처리 - 미들웨어 오류로 인해 에이전트가 중단되지 않도록 함

적절한 훅 타입 사용 :

순차적 로직(로깅, 유효성 검사)에는 노드 스타일

제어 흐름(재시도, 폴백, 캐싱)에는 랩 스타일

사용자 정의 상태 속성을 명확하게 문서화

통합하기 전에 미들웨어를 독립적으로 단위 테스트

실행 순서 고려 - 중요한 미들웨어를 목록의 첫 번째에 배치

가능한 경우 내장 미들웨어 사용, 바퀴를 재발명하지 마세요 :)

도구 동적 선택 성능 및 정확도를 향상시키기 위해 런타임에 관련 도구를 선택합니다.

이점:

더 짧은 프롬프트 - 관련 도구만 노출하여 복잡성 감소더 나은 정확도 - 모델이 더 적은 옵션에서 올바르게 선택권한 제어 - 사용자 액세스에 따라 도구를 동적으로 필터링 from langchain.agents import create_agent from langchain.agents.middleware import AgentMiddleware, ModelRequest from typing import Callable class ToolSelectorMiddleware ( AgentMiddleware ): def wrap_model_call ( self , request : ModelRequest, handler : Callable[[ModelRequest], ModelResponse], ) -> ModelResponse: """상태/컨텍스트에 따라 관련 도구를 선택하는 미들웨어.""" # 상태/컨텍스트에 따라 작고 관련성 높은 도구 하위 집합 선택 relevant_tools = select_relevant_tools(request.state, request.runtime) request.tools = relevant_tools return handler(request) agent = create_agent( model = "openai:gpt-4o" , tools = all_tools, # 사용 가능한 모든 도구를 미리 등록해야 함 # 미들웨어를 사용하여 주어진 실행에 관련된 더 작은 하위 집합을 선택할 수 있습니다. middleware = [ToolSelectorMiddleware()], )

Show 확장 예제: GitHub vs GitLab 도구 선택

from dataclasses import dataclass from typing import Literal, Callable from langchain.agents import create_agent from langchain.agents.middleware import AgentMiddleware, ModelRequest, ModelResponse from langchain_core.tools import tool @tool def github_create_issue ( repo : str , title : str ) -> dict : """GitHub 저장소에 이슈를 생성합니다.""" return { "url" : f "https://github.com/ { repo } /issues/1" , "title" : title} @tool def gitlab_create_issue ( project : str , title : str ) -> dict : """GitLab 프로젝트에 이슈를 생성합니다.""" return { "url" : f "https://gitlab.com/ { project } /-/issues/1" , "title" : title} all_tools = [github_create_issue, gitlab_create_issue] @dataclass class Context : provider: Literal[ "github" , "gitlab" ] class ToolSelectorMiddleware ( AgentMiddleware ): def wrap_model_call ( self , request : ModelRequest, handler : Callable[[ModelRequest], ModelResponse], ) -> ModelResponse: """VCS 제공업체에 따라 도구를 선택합니다.""" provider = request.runtime.context.provider if provider == "gitlab" : selected_tools = [t for t in request.tools if t.name == "gitlab_create_issue" ] else : selected_tools = [t for t in request.tools if t.name == "github_create_issue" ] request.tools = selected_tools return handler(request) agent = create_agent( model = "openai:gpt-4o" , tools = all_tools, middleware = [ToolSelectorMiddleware()], context_schema = Context, ) # GitHub 컨텍스트로 호출 agent.invoke( { "messages" : [{ "role" : "user" , "content" : "Open an issue titled 'Bug: where are the cats' in the repository `its-a-cats-game`" }] }, context = Context( provider = "github" ), )

주요 포인트:

모든 도구를 미리 등록

미들웨어가 요청당 관련 하위 집합 선택

설정 요구 사항에 context_schema 사용

추가 리소스